Within the realm of digital communications, digital voice assistants are gaining momentum because they permit natural, hands-free interaction with voice-enabled devices. In a customer-centric scenario, a sound strategy is to deploy voice assistants and voice bots (computer programs that handle voice conversations and are driven by natural language processing and artificial intelligence). Well-known voice assistants include Amazon’s Alexa, Google Assistant, Microsoft’s Cortana, Samsung’s Bixby, and Apple’s Siri.

For these scenarios, certified development kits serve developers well. With them, developers can quickly and cost-effectively set up solutions, applications, and prototypes for smart gateways, smart lighting and plugs, IoT sound sensors, smart speakers, smart thermostats, and other devices that support voice services.

The discussion that follows focuses on the voice-enabled front-end audio system kit for Alexa-enabled products offered by Microchip Technology Inc. (through its Microsemi subsidiary): the Microchip AcuEdge ZLK38AVS2 Development Kit for Amazon AVS.

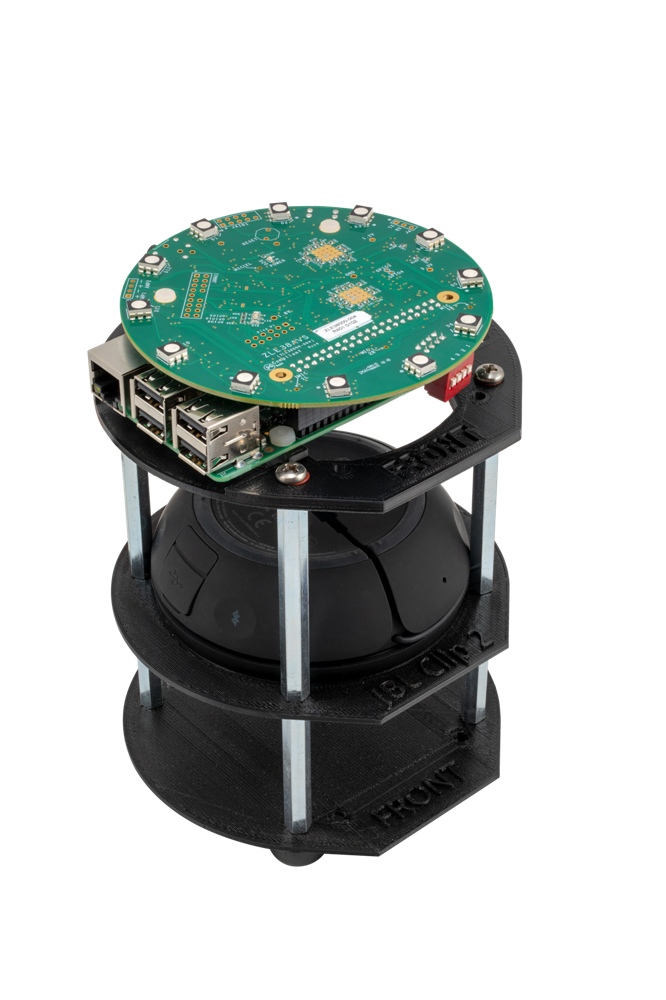

Fig. A. Microchip’s AcuEdge ZLK38AVS2 Development Kit

The development kit consists of:

- ZLE38000-004 evaluation board

- Raspberry Pi 3

- 32GB Micro SD card

- 5V 2A micro USB power supply

- JBL Clip 2 speaker

- Plastic stand

- Hardware assembly.

The kit is equipped with a Timberwolf ZL38063 audio processor, powered by AcuEdge technology and Sensory’s TrulyHandsFree “Alexa” wake-word engine, for embedded and cloud-based automatic speech recognition (ASR). It supports audio enhancement features and functions such as:

- Trigger word and command phrase detection, including preloaded “Alexa” wake-word detection

- Two-way full duplex voice communication

- Audio barge-in support and trigger word detection during playback

- Four on-board digital MEMS (micro-electromechanical system) microphones, supporting one-microphone hands-free operation and two- or three-microphone far-field (Amazon AVS certified) linear or triangular arrays, with 180- and 360-degree audio pick-up, stereo acoustic echo cancellation, and ambient noise reduction

- Beamforming and Direction of Arrival (DOA) estimation across the microphone array, which locate the source of speech and differentiate it from background noise for enhanced audio pick-up and increased voice clarity

- De-reverberation for increased voice recognition accuracy and intelligibility

- Smart automatic gain control (AGC) for improved voice recognition, even at extended distances

- 12 RGB LEDs in a ring on the unit for status indicators, such as the recording status
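
To make the DOA item in the list above more concrete, here is a minimal sketch of one generic approach: estimate the time delay between two microphones by cross-correlation, then convert that delay into an angle. This is not the algorithm the ZL38063 uses, which the source does not describe. The sample rate, microphone spacing, and test signal are hypothetical values chosen only for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at room temperature


def best_lag(ref, other, max_lag):
    """Return the lag (in samples) of `other` relative to `ref` that maximizes
    their cross-correlation. A positive lag means `other` heard the sound later."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = sum(
            ref[i] * other[i + lag]
            for i in range(len(ref))
            if 0 <= i + lag < len(other)
        )
        if score > best_score:
            best, best_score = lag, score
    return best


def arrival_angle_deg(lag_samples, sample_rate_hz, mic_spacing_m):
    """Convert an inter-microphone delay into an angle measured from broadside."""
    delay_s = lag_samples / sample_rate_hz
    ratio = delay_s * SPEED_OF_SOUND_M_S / mic_spacing_m
    ratio = max(-1.0, min(1.0, ratio))  # clamp against rounding and noise
    return math.degrees(math.asin(ratio))


# Hypothetical test signal: a short burst that reaches the second
# microphone three samples after the first one.
burst = [0.0] * 20 + [1.0, 2.0, -1.0, 0.5] + [0.0] * 40
mic_a = burst
mic_b = [0.0] * 3 + burst[:-3]

lag = best_lag(mic_a, mic_b, max_lag=5)
angle = arrival_angle_deg(lag, sample_rate_hz=48_000, mic_spacing_m=0.05)
print(f"lag = {lag} samples, angle = {angle:.1f} degrees from broadside")
```

A two-microphone delay only resolves angles across 180 degrees, which is consistent with the list above pairing linear arrays with 180-degree pick-up and triangular arrays with 360-degree pick-up.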

Let us now conduct the functional testing of the Microchip AcuEdge ZLK38AVS2 Development Kit for Amazon AVS. During the testing we will use the wake word “Alexa,” as this kit is engineered for evaluating voice-enabled front-end audio systems for Alexa-enabled products.

Functional Testing

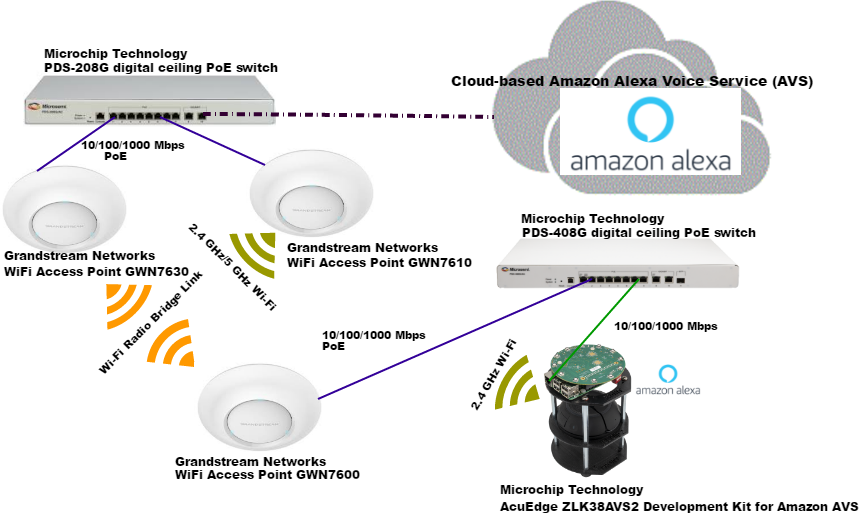

The test setup consisted of the front-end audio development kit (the Microchip AcuEdge ZLK38AVS2 Development Kit for Amazon AVS) and the following systems (refer to Fig. B.):

- Microchip Technology’s PDS-408G Digital Ceiling PoE switch with software release version 1.13 for network connectivity and PoE delivery

- Grandstream Networks GWN7630 802.11ac Wave-2 Wi-Fi access point running software version 1.0.11.8

- Grandstream Networks GWN7600 802.11ac Wave-2 Wi-Fi access point running software version 1.0.11.8

- Microchip Technology’s PDS-208G Digital Ceiling PoE switch with software release version 2.53 for network connectivity and PoE delivery

- Grandstream Networks GWN7610 802.11ac Wi-Fi access point running software version 1.0.11.8

Fig. B. Functional testing of Microchip AcuEdge ZLK38AVS2 Development Kit

We first assembled the kit from the ZLE38000-004 evaluation board Rev 401 (which interfaces with the Raspberry Pi 3), the Raspberry Pi 3, and the JBL Clip 2 portable speaker, using the provided installation accessories. We connected the speaker cord to the 3.5mm jack on the ZLE38000-004 board. We then wrote a pre-built Raspberry Pi image, based on the Raspbian Linux distribution, onto a 32GB SD card for use with the kit. The image has the Amazon Alexa sample application pre-configured to work with the Microchip ZLK38AVS2 kit, and it has both VNC and SSH enabled. We inserted the SD card and powered up the Raspberry Pi. In our setup, the Microchip PDS-408G and PDS-208G Digital Ceiling PoE switches provided PoE and network connectivity, and the Grandstream Networks GWN7630, GWN7610, and GWN7600 Wi-Fi access points provided Wi-Fi connectivity.

We opened a browser, then created and logged in to our Amazon developer account. Within the Alexa Voice Service developer console, we clicked on Products and created and registered a product by filling in the relevant fields: product name, product ID, product type (device with Alexa built-in), and end user interaction (Hands-free, which lets users interact with Alexa by voice at a close distance, and Far-field, which lets users interact with Alexa by voice from a longer distance). We also created a security profile. Once the product creation and registration were complete, we took note of the product ID and client ID, as we would need those values to associate the Alexa sample application running on the kit with our Amazon developer account.

We used VNC to access the Raspberry Pi desktop with the required credentials. We then opened a terminal session on the Raspberry Pi, entered the commands “cd ~/ZLK38AVS” and “make avs_config”, and accepted the ensuing license agreements. We were prompted for the product ID and the client ID (the client ID is listed under “Other devices and platforms” for the product that we registered earlier on the Amazon developer site), and the Alexa sample app installation was completed.

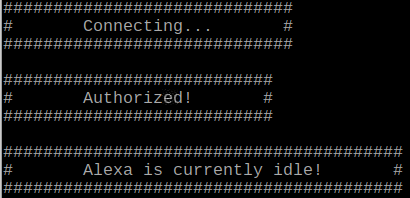

We started the Alexa sample application with the command “make start_alexa”. The first time the sample application started, it prompted us for a web-based authorization, providing a link and an associated code to enter to complete the authorization. Once the authorization and registration were complete, the kit responded on the terminal session with “Connecting … Authorized … Alexa is currently idle” (refer to Fig. C.).

Fig. C. ZLK38AVS2 Development Kit – Authorization & Registration
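
The two make targets used during setup can also be driven from a script. The sketch below is a hypothetical convenience wrapper, not part of the kit's software: only the `~/ZLK38AVS` directory and the `avs_config` and `start_alexa` targets come from the steps above, while the function name and the `dry_run` flag are assumptions. Because `make avs_config` prompts for license acceptance and IDs, it should be run from an interactive terminal.

```python
import subprocess
from pathlib import Path

# Directory named in the setup steps; assumed to exist on the Raspberry Pi image.
KIT_DIR = Path.home() / "ZLK38AVS"


def run_target(target, kit_dir=KIT_DIR, dry_run=True):
    """Build the `make <target>` command and, unless dry_run is set,
    run it inside the kit directory. Returns the command as a list."""
    cmd = ["make", target]
    if dry_run:
        return cmd  # nothing is executed; useful for checking the plan
    # check=True raises CalledProcessError if make exits with an error.
    subprocess.run(cmd, cwd=kit_dir, check=True)
    return cmd


# Dry run of the sequence described above: configure first, then start.
plan = [run_target("avs_config"), run_target("start_alexa")]
for cmd in plan:
    print(" ".join(cmd))
```

Calling `run_target("start_alexa", dry_run=False)` on the Raspberry Pi would be equivalent to typing the command in the kit directory.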

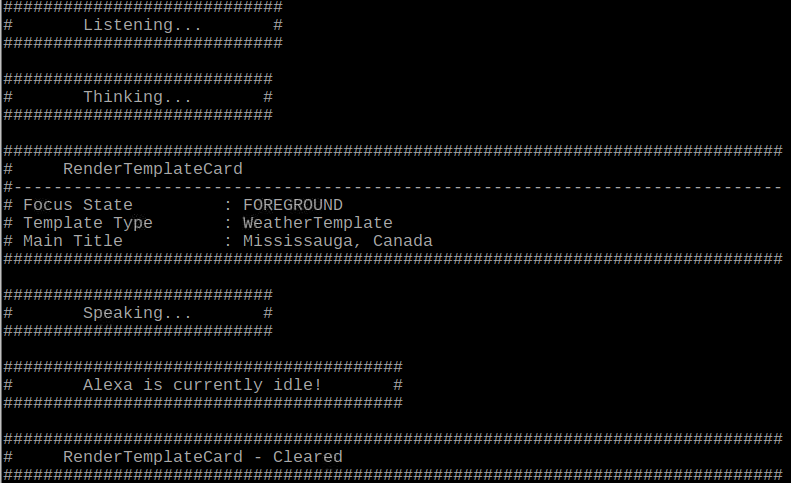

We were up and running quickly. The development kit was now listening (although we could mute it). It starts recording when it hears the wake word “Alexa”; no audio is stored or sent to the cloud unless the device detects the wake word. We simply had to say the wake word and begin our query. We said, “Alexa, what is the weather in Mississauga now?” The ring of 12 RGB LEDs on the unit indicated the recording status. The recording was sent to the cloud for processing and storage, and the response was sent back to the kit over an SSL-encrypted traffic stream (refer to Fig. D.).

Fig. D. ZLK38AVS2 Development Kit – Wake Word Alexa
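
The wake-word behavior described above, where nothing leaves the device until “Alexa” is detected, can be pictured as a simple gate in front of the uplink. The sketch below is an illustration only: the class, its method, and the boolean flags are hypothetical stand-ins for the wake-word engine and the end-of-speech detector, and the list stands in for the encrypted stream to the cloud.

```python
class WakeWordGate:
    """Forwards audio frames only between a wake-word hit and the end of the query."""

    def __init__(self):
        self.capturing = False
        self.sent_to_cloud = []  # stand-in for the encrypted uplink

    def on_frame(self, frame, wake_word_detected=False, end_of_utterance=False):
        if not self.capturing:
            # Before the wake word, every frame is dropped and nothing is kept.
            # This sketch also drops the wake-word frame itself; a real client
            # may handle that frame differently.
            if wake_word_detected:
                self.capturing = True
            return
        self.sent_to_cloud.append(frame)
        if end_of_utterance:
            self.capturing = False  # go back to waiting for the wake word


gate = WakeWordGate()
gate.on_frame("background noise")
gate.on_frame("Alexa", wake_word_detected=True)
gate.on_frame("what is the weather")
gate.on_frame("in Mississauga now", end_of_utterance=True)
gate.on_frame("more background noise")
print(gate.sent_to_cloud)
```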

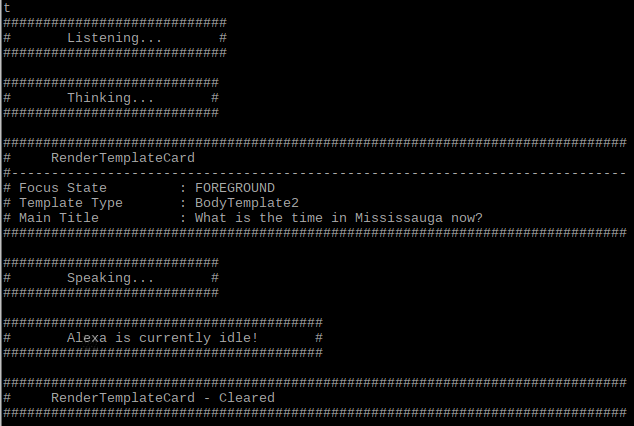

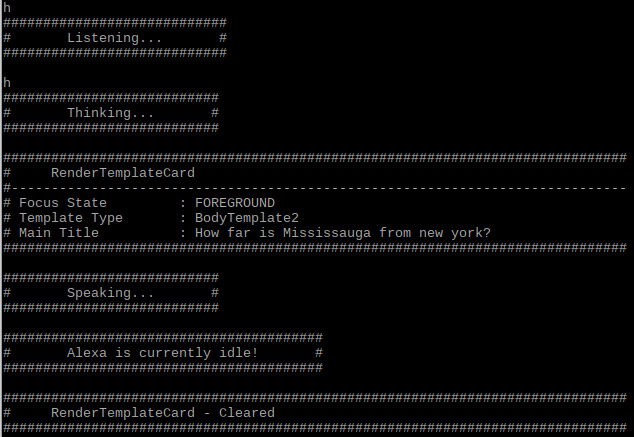

The sample application also offered Tap to talk and Hold to talk options. On the terminal, for Tap to talk, we pressed ‘t’ followed by the ENTER key and then spoke our query, “What is the time in Mississauga now?” (refer to Fig. E.). For Hold to talk, we pressed ‘h’ followed by the ENTER key (which simulates holding a button), spoke our query, “How far is Mississauga from New York?” (refer to Fig. F.), and then pressed ‘h’ followed by the ENTER key again (which simulates releasing the button). Both modes worked without the wake word.

Fig. E. ZLK38AVS2 Development Kit – Tap-to-Talk

Fig. F. ZLK38AVS2 Development Kit – Hold-to-Talk
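
The two terminal controls behave like a small state machine, sketched below. The state names and functions are hypothetical and are not taken from the sample application's source. The sketch also assumes that a Tap-to-talk capture ends on its own when speech stops, while a Hold-to-talk capture ends only on the second ‘h’.

```python
IDLE = "idle"
TAP_LISTENING = "tap-listening"
HOLD_LISTENING = "hold-listening"


def on_key(state, key):
    """Return the next state after the user types `key` followed by ENTER."""
    if state == IDLE and key == "t":
        return TAP_LISTENING   # a tap starts a capture
    if state == IDLE and key == "h":
        return HOLD_LISTENING  # first 'h' simulates pressing and holding
    if state == HOLD_LISTENING and key == "h":
        return IDLE            # second 'h' simulates releasing the button
    return state               # any other key leaves the state unchanged


def on_end_of_speech(state):
    """Assumed behavior: only a tap-initiated capture stops when speech ends."""
    return IDLE if state == TAP_LISTENING else state


# Tap to talk: 't', speak, capture ends when the speaker stops.
state = on_key(IDLE, "t")
state = on_end_of_speech(state)

# Hold to talk: 'h', speak, then 'h' again to release.
state = on_key(state, "h")
state = on_end_of_speech(state)
state = on_key(state, "h")
print(state)
```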

Voice communication, audio barge-in during device playback, far-field 360-degree audio pick-up in the presence of interfering noise sources (including external HVAC noise), device playback, and pre-loaded “Alexa” wake-word detection all worked satisfactorily. In our test setup, the kit made an excellent smart speaker application.

Conclusion

The front-end audio development kit from Microchip, the Microchip AcuEdge ZLK38AVS2 Development Kit for Amazon AVS, was very easy to set up and test. It supports cloud-based and embedded ASR solutions. The kit includes all the building blocks (software, voice processing technologies, chipsets, etc.) that leverage AVS APIs to help developers easily, quickly, and cost-effectively build and deploy smart speaker prototypes, applications, and solutions with Amazon AVS voice support.

Edited by

Erik Linask